How a National AI Lab used Collinear's Simulation Lab to align a frontier Arabic-English model family

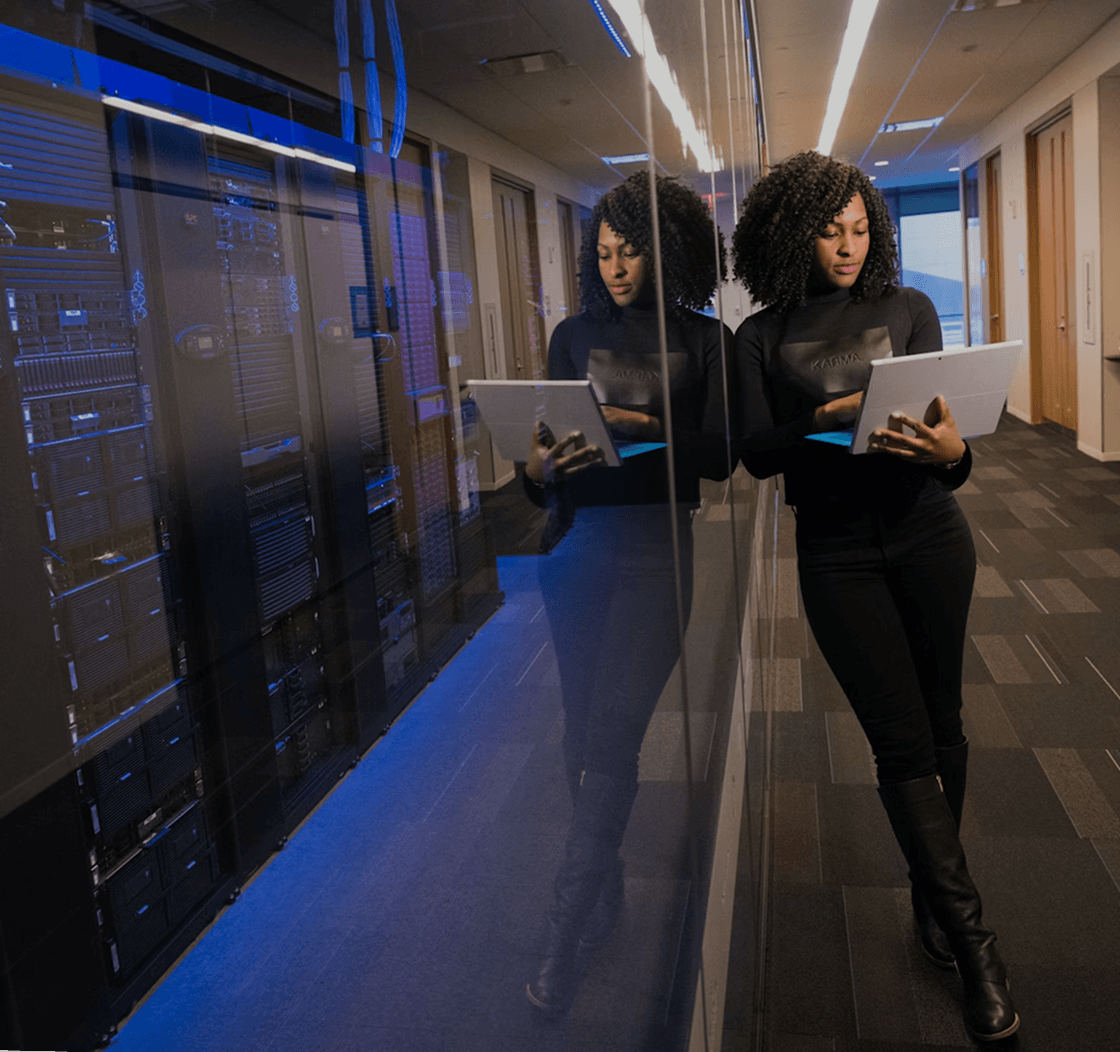

A state-backed AI lab in the Middle East built a frontier multilingual model family for Arabic and English, spanning four model sizes (7B, 13B, 34B, 70B). The models needed to ship across business domains including safety, instruction following, and enterprise workflows, serving hundreds of millions of Arabic speakers across dialects.

Before deployment, the lab needed to benchmark and improve performance across all four model sizes and 16 business domains, without relying on manual evaluation at scale.

Aligning a bilingual Arabic-English model family for production across government services, education, and commercial applications presented problems that standard approaches couldn't solve.

No Arabic safety infrastructure existed. Arabic AI safety isn't a translation of English safety. The models needed to handle the interplay between Islamic values and AI outputs in ways that Western safety taxonomies don't cover. Culturally specific manipulation attempts required dedicated testing.

Dialect coverage at scale. The models needed to perform consistently across Modern Standard Arabic and regional dialects while maintaining language quality in each. Most existing benchmarks don't test for this.

Four model sizes, sixteen domains. Every model variant (7B through 70B) needed evaluation across all 16 business domains. Manual red-teaming at that volume was not feasible.

Government-grade deployment bar. The models support critical national infrastructure. Safety and reliability standards were set by government requirements, not industry benchmarks.

The lab used Collinear's Simulation Lab to build a structured benchmarking and alignment pipeline across all four model sizes.

55k+ simulations per model family. The Simulation Lab generated tens of thousands of targeted scenarios across all 16 business domains, covering safety, instruction following, and enterprise workflows in both Arabic and English. This replaced what would have been months of manual evaluation.

Automated failure mode detection across 16 categories. Every simulated interaction was scored by the Simulation Lab's verification engine, which identified 1k+ examples of specific failure modes per category. This gave the lab a structured, auditable map of where each model variant was vulnerable.

10B tokens of post-training data. The high-signal data generated through simulation fed directly into post-training alignment, enabling targeted improvement on the specific categories and languages where models underperformed, so no compute was wasted on areas that already performed well.
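The flow from scored simulations to targeted post-training data can be illustrated with a minimal sketch. This is not the Simulation Lab's API: the `SimResult` record, the `failure_rates` and `cells_needing_data` helpers, the pass/fail scoring, and the 20% threshold are all hypothetical, chosen only to show how a per-variant failure map might be built and used to decide where new training data is needed.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SimResult:
    """One scored simulation (hypothetical record, not a Collinear type)."""
    model_size: str  # e.g. "7B"
    domain: str      # one of the business domains
    language: str    # "ar" or "en"
    passed: bool     # verdict from some verification step


def failure_rates(results):
    """Return the failure rate for each (model_size, domain, language) cell."""
    totals = defaultdict(int)
    failures = defaultdict(int)
    for r in results:
        key = (r.model_size, r.domain, r.language)
        totals[key] += 1
        if not r.passed:
            failures[key] += 1
    return {key: failures[key] / totals[key] for key in totals}


def cells_needing_data(rates, threshold=0.2):
    """Pick the cells whose failure rate exceeds an assumed threshold."""
    return sorted(key for key, rate in rates.items() if rate > threshold)


# Toy data for illustration only; not results from the case study.
results = [
    SimResult("7B", "safety", "ar", False),
    SimResult("7B", "safety", "ar", True),
    SimResult("7B", "safety", "en", True),
    SimResult("70B", "safety", "ar", True),
]
rates = failure_rates(results)
print(cells_needing_data(rates))  # [('7B', 'safety', 'ar')]
```

Keying the map by model size, domain, and language keeps the output auditable: each cell can be traced back to the simulations that produced it, and only the cells above the threshold are queued for additional post-training data.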

- 10,000+ issues identified in Arabic in pre-production, enabling targeted remediation before any model size was deployed.

- ~1/7th the failure rate of comparable open-source models. Where baseline models showed a 72.6% failure rate on Arabic-language manipulation attempts, the lab's models failed at roughly one-seventh of that rate after alignment with simulation-generated data.

- 16 business domains evaluated systematically across all four model sizes, producing structured scorecards that enabled the lab to demonstrate reliability for government and enterprise deployments.

- 55k+ simulations per model family replaced manual red-teaming with a repeatable, scalable evaluation pipeline that works across every model variant.
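As a back-of-envelope reading of the figures in the list above, the short calculation below works out what "~1/7th" of the 72.6% baseline implies, and how many size-by-domain combinations the evaluation covers. The implied post-alignment rate is not stated in the case study; it is derived here on the assumption that the one-seventh ratio applies to the same Arabic-language manipulation benchmark as the baseline figure.

```python
# Figures taken from the case study.
baseline_failure_rate = 0.726  # baseline models, Arabic manipulation attempts
ratio = 1 / 7                  # "~1/7th", so treat this as approximate
model_sizes = ["7B", "13B", "34B", "70B"]
num_domains = 16

# Derived values (assumptions, not reported results).
implied_rate = baseline_failure_rate * ratio
evaluation_cells = len(model_sizes) * num_domains

print(f"Implied failure rate: {implied_rate:.1%}")        # 10.4%
print(f"Size-by-domain combinations: {evaluation_cells}")  # 64
```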

With the safety and alignment foundation established, the lab is extending the same Simulation Lab pipeline to newer, more capable model variants, ensuring that as capabilities scale, evaluation and safety keep pace.

See what Collinear's Simulation Lab can do for your team.
