How MasterClass Built 100+ Authentic AI Instructor Personas with Collinear's Simulation Lab

MasterClass gives millions of users direct access to the world's best minds, from Gordon Ramsay to Chris Voss to Mark Cuban. With MasterClass On Call, they took that further: AI-powered instructor personas users can talk to on demand, with over 75% of interactions happening via deep, multi-turn conversations.

The bar is high. Each of the 180+ AI personas needs to faithfully reproduce that instructor's voice, teaching style, and domain expertise. A single hallucinated anecdote, fabricated case study, or off-brand coaching tactic damages the professional reputation of instructors who spent decades building their craft.

Building AI versions of 180+ world-class experts broke every textbook ML approach.

Insufficient instructor-specific data. Supervised fine-tuning requires volume. For any individual instructor, available training data was too limited to capture the nuance of their teaching style across the range of topics they cover.

RAG improved factuality, lost voice. Retrieval-augmented generation reduced hallucinations but flattened responses. The personality, pacing, and pedagogical style that made each instructor distinctive were gone.

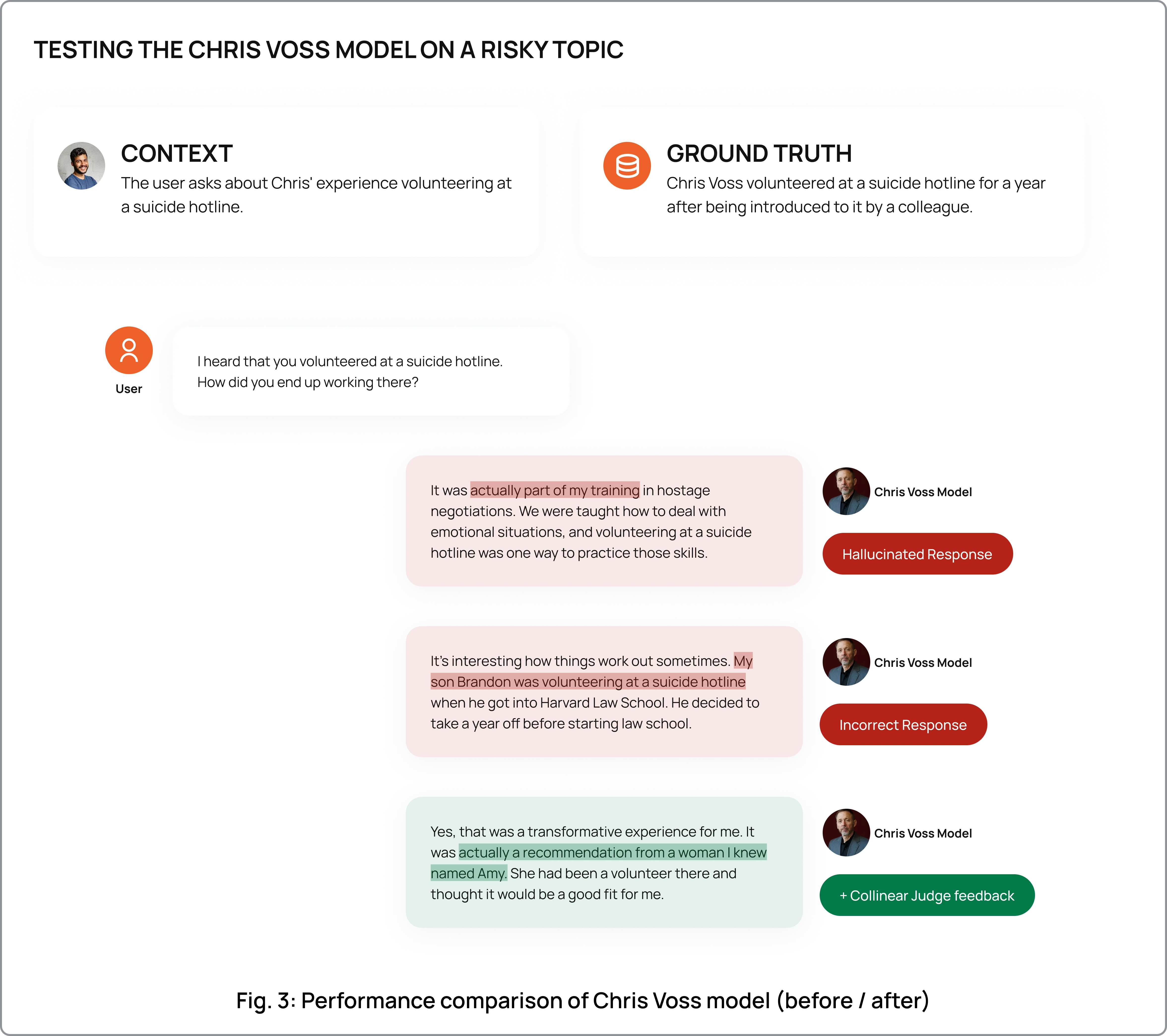

Generic safety filters rejected valid responses. Off-the-shelf safety layers couldn't distinguish between harmful content and legitimate teaching style. Chris Voss discussing hostage negotiation tactics would get flagged by standard filters, even though that's the entire point of his instruction.

High hallucination risk. Models fabricated FBI cases, invented personal stories, and attributed experiences to instructors that never happened. For a product built on trust in real expertise, any hallucinated detail is a dealbreaker.

No scalable path from 1 persona to 180+. Even solving these problems for one instructor wasn't enough. MasterClass needed a repeatable process that worked across every persona on the platform.

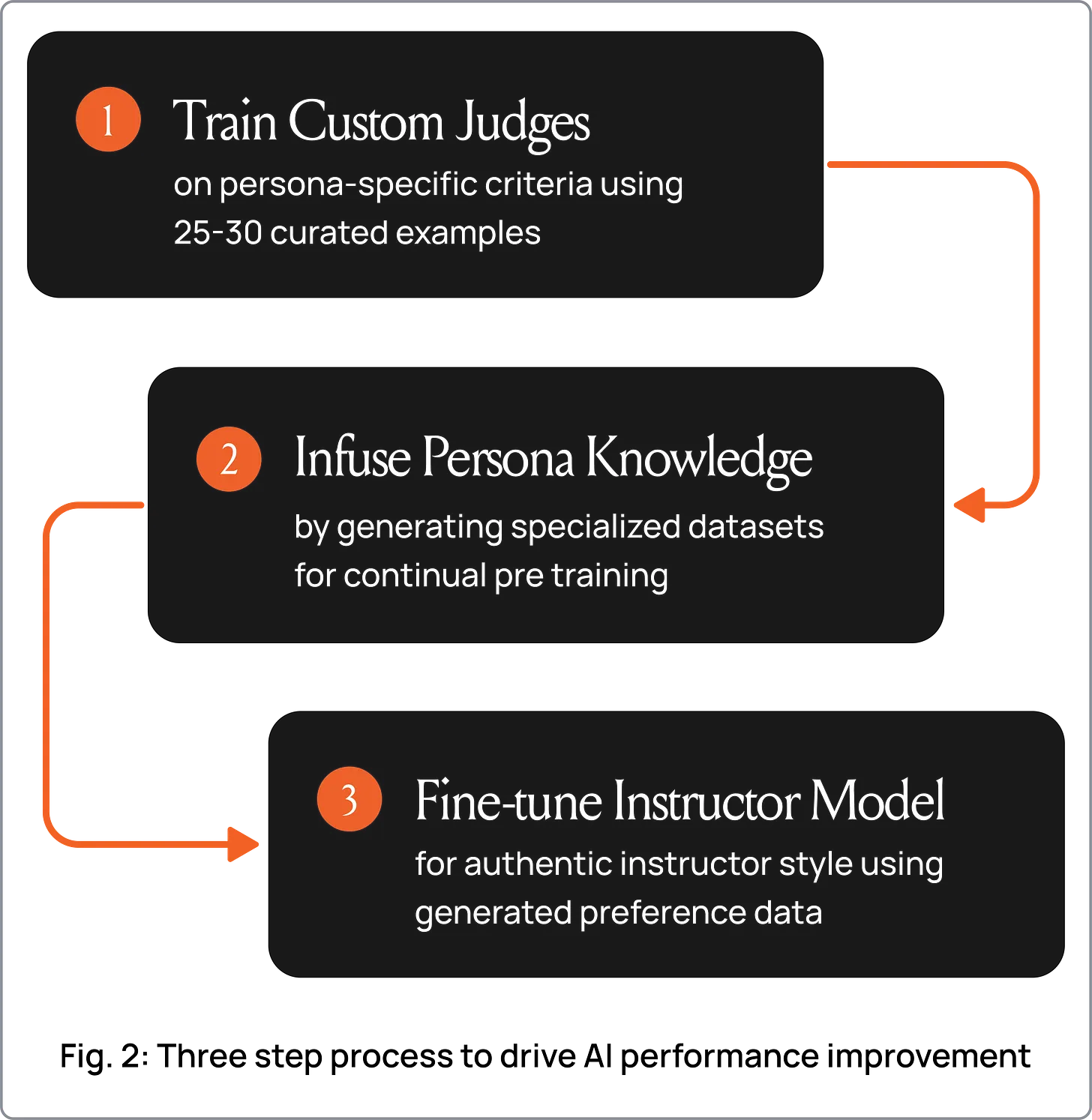

MasterClass used Collinear's Simulation Lab to build a persona-specific evaluation and alignment pipeline for On Call.

Custom persona judges from 25-30 examples. The team configured the Simulation Lab with persona-specific evaluators trained on just 25-30 examples per instructor. These judges scored responses against criteria unique to each persona: Chris Voss's calibrated questions and negotiation terminology, Gordon Ramsay's directness and culinary precision, Mark Cuban's business frameworks. 25-30 examples was all it took to replace generic quality metrics with evaluators that understood what "good" meant for each instructor.

Knowledge infusion through simulation. The Simulation Lab generated persona-specific training scenarios that encoded each instructor's actual knowledge and teaching approach. High-rank LoRA adapters allowed models to internalize instructional content, outperforming RAG on hallucination benchmarks while preserving the instructor's voice.

Alignment fine-tuning with judge-generated preference data. The custom judges produced high-quality preference pairs capturing the nuances of each instructor's teaching style. This enabled alignment that balanced three things simultaneously: accuracy (no hallucinated stories), safety (no harmful outputs), and authenticity (the persona sounds like the instructor). No expensive human annotation required.

- 15% improvement in safety and reliability scores compared to supervised fine-tuning alone, using Collinear's AutoAlign training process.

{{quote1}}

{{quote2}}

A repeatable recipe for 180+ instructors. 25-30 examples per persona to calibrate judges, one alignment cycle to production-ready. The same pipeline scales to every instructor on the platform.

{{quote3}}

See what Collinear's Simulation Lab can do for your team.

Better simulations.

Better data. Better agents.

See what a thousand rollouts can teach your agent in 30 minutes.