How ServiceNow's Apriel-1.5-15B beats 10x larger models with Collinear's Simulation Lab

- ~50% fewer training tokens

- 70B performance in a 15B model

- ~1.8× faster SFT cycles

- $10M+ annualized savings

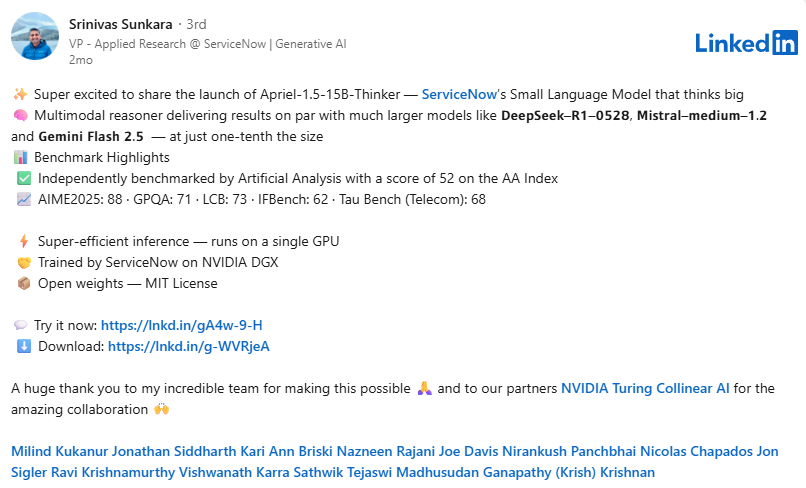

ServiceNow is embedding AI into the core of its platform, powering intelligent workflows across IT, HR, and customer service for thousands of enterprises globally. Central to that effort is Apriel, ServiceNow's open-weight model family built by its SLAM Labs research team. Over the course of 2025, SLAM Labs shipped six models and two training frameworks, including Apriel-1.5-15B-Thinker, a reasoning model that achieves frontier-level performance on a single GPU.

Getting there required a fundamentally different approach to training. Scaling up model size and data volume had run its course. ServiceNow needed to make a 15B-parameter model perform like models 4-5x its size, and do it efficiently enough to sustain rapid iteration across the full Apriel family. That meant the bottleneck was not only compute: it was the quality and signal density of the training data.

Challenge

As ServiceNow pushed its GenAI initiatives from pilots to production, the strategy of training bigger models on bigger datasets ran into clear limits.

- Rising costs and slower cycles. Escalating GPU spend and longer training runs made rapid iteration unsustainable. Each cycle cost more and delivered diminishing returns.

- Noisy synthetic data. The synthetic datasets being used carried too many low-signal or incorrect examples. Volume was going up, but training efficiency was going down.

- Performance plateau. Accuracy gains from scaling were flattening despite increasing investment. Throwing more data and more compute at the problem had stopped working.

ServiceNow needed to break the plateau without continuing to scale up. The goal was specific: achieve frontier-class reasoning in a 15B model that could run on a single GPU, at a fraction of the cost of larger alternatives.

Solution

ServiceNow used Collinear's Simulation Lab to replace noisy synthetic data with high-signal training environments for agentic reasoning, code, and math.

- High-fidelity simulated environments. The Simulation Lab generated realistic scenarios across the domains that mattered most to Apriel's post-training: agentic workflows, code generation, and mathematical reasoning. Instead of filtering through massive synthetic datasets to find usable examples, the simulation environment produced high-signal interactions by design.

- Built-in verification. Every simulated interaction was scored for correctness, coherence, and instruction-following through the Simulation Lab's verification engine. Low-signal and incorrect examples were caught programmatically, eliminating the noise that had been dragging down training efficiency.

- A repeatable improvement loop. Each round of simulation produced a traceable, auditable dataset that the SLAM Labs team could benchmark and reuse across the Apriel model family. What had been an ad hoc process of gathering and cleaning synthetic data became a structured pipeline: simulate, verify, train, measure, repeat.
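The simulate, verify, train, measure, repeat loop described above can be sketched in a few lines of Python. This is only an illustration of the idea, not Collinear's API or ServiceNow's pipeline: the `Interaction` record, the 0.8 threshold, and the `simulate`, `train`, and `measure` callables are all hypothetical stand-ins, and the three scores are assumed to arrive already computed on a 0-to-1 scale.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Interaction:
    """One simulated example with hypothetical verifier scores in [0, 1]."""
    prompt: str
    response: str
    correctness: float
    coherence: float
    instruction_following: float


def passes(ex: Interaction, threshold: float = 0.8) -> bool:
    # Assumption: an example is kept only if its weakest score clears the bar.
    return min(ex.correctness, ex.coherence, ex.instruction_following) >= threshold


def verify(batch: list[Interaction], threshold: float = 0.8) -> list[Interaction]:
    """Drop low-signal or incorrect examples before they reach training."""
    return [ex for ex in batch if passes(ex, threshold)]


def improvement_loop(
    simulate: Callable[[int], list[Interaction]],
    train: Callable[[list[Interaction]], None],
    measure: Callable[[], float],
    rounds: int,
    threshold: float = 0.8,
) -> list[dict]:
    """Run simulate -> verify -> train -> measure for a fixed number of rounds."""
    history = []
    for round_id in range(rounds):
        batch = simulate(round_id)        # generate candidate interactions
        kept = verify(batch, threshold)   # filter programmatically
        train(kept)                       # fine-tune on the verified subset
        history.append({
            "round": round_id,
            "generated": len(batch),
            "kept": len(kept),
            "metric": measure(),          # benchmark after this round
        })
    return history
```

The per-round `history` records are what would make each dataset traceable: they show how many examples were generated, how many survived verification, and what the benchmark looked like afterward.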

Results

By training on high-signal simulation-generated data, ServiceNow achieved large-model performance at a fraction of the cost and time.

- ~50% fewer tokens and compute with no loss in benchmark accuracy.

- The 15B model matched 32B-70B baselines on complex agentic reasoning and coding tasks. Apriel-1.5-15B-Thinker runs on a single GPU while consuming 40% fewer tokens than comparable models.

- ~1.8× faster supervised fine-tuning cycles, accelerating iteration and enabling SLAM Labs to ship six models in a single year.

- $10M+ in annualized savings from lower training costs and smaller production models.

ServiceNow's Apriel-1.5-15B-Thinker on HuggingFace

See what Collinear's Simulation Lab can do for your team.

Book a demo
